AI in Design

2020 brought with it plenty of surprises, setbacks, and upheaval. Not only did the year shed a harsh light on systemic problems in healthcare, leadership, and social justice, but it also provided a glimpse into many of the challenges we’ll face in the future. Things seemed to shift to that unmistakable point of no return, as if to signal that they would never go back to being “normal” (if there ever was such a thing). For designers, the announcement and subsequent demos of GPT-3 also provided a glimpse into what the future of design tools might look like.

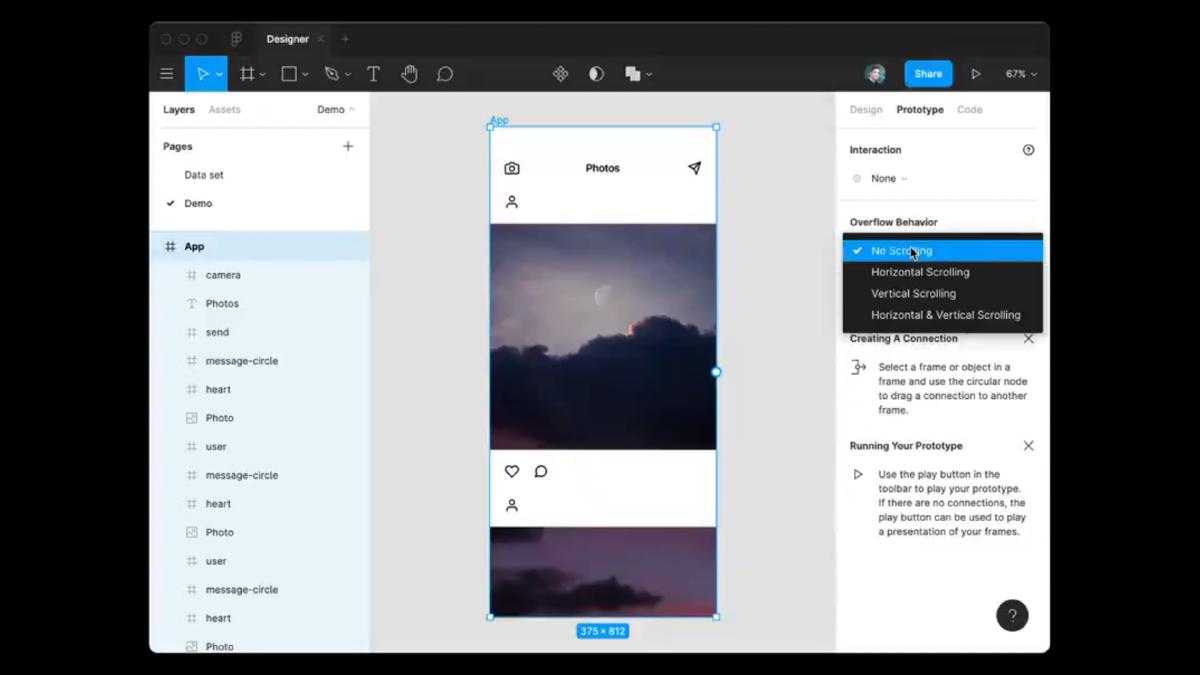

GPT-3 is a language-generating AI by OpenAI that can produce remarkably human-like text on demand. At the time of its release it was the largest language model ever created, and early experiments have demonstrated its ability to produce convincing streams of text in a range of different styles given only a few parameters. What’s perhaps even more unnerving is its ability to output code, which can then be consumed and acted on within design tools. Take for example Jordan Singer’s demo of a Figma plugin called ‘Designer’ that can generate a functional prototype from raw text. By describing the layout as “an app that has a navigation bar with a camera icon, ‘Photos’ title, and a message icon, a feed of photos with each photo having a user icon, a photo, a heart icon, and a chat bubble icon”, Singer demonstrated the plugin’s ability to generate an Instagram-like layout with no further input.

Singer’s demo caused quite the stir and for good reason: tools such as this will continue to improve, and we all know it. It signals a future where many of the tasks we perform now will be automated or at least aided by AI. Technology that has the potential to eliminate tasks, and by extension jobs, will invariably elicit some fear. This is especially true in an industry that already has a firm grasp on how technology can disrupt and eliminate jobs. While AI will no doubt reduce the need to perform many tasks manually, automating menial work will also free us up to focus on the more meaningful work that provides additional value. The question becomes: how might we leverage AI and the benefits it brings to help in the design process? Additionally, how might things go wrong when we do?

AI is already here

First, it’s important to note that algorithm-driven design is already being harnessed within the greater design industry. Let’s take a closer look at a couple of the categories these current uses fall into and explore examples that are already available.

Automation

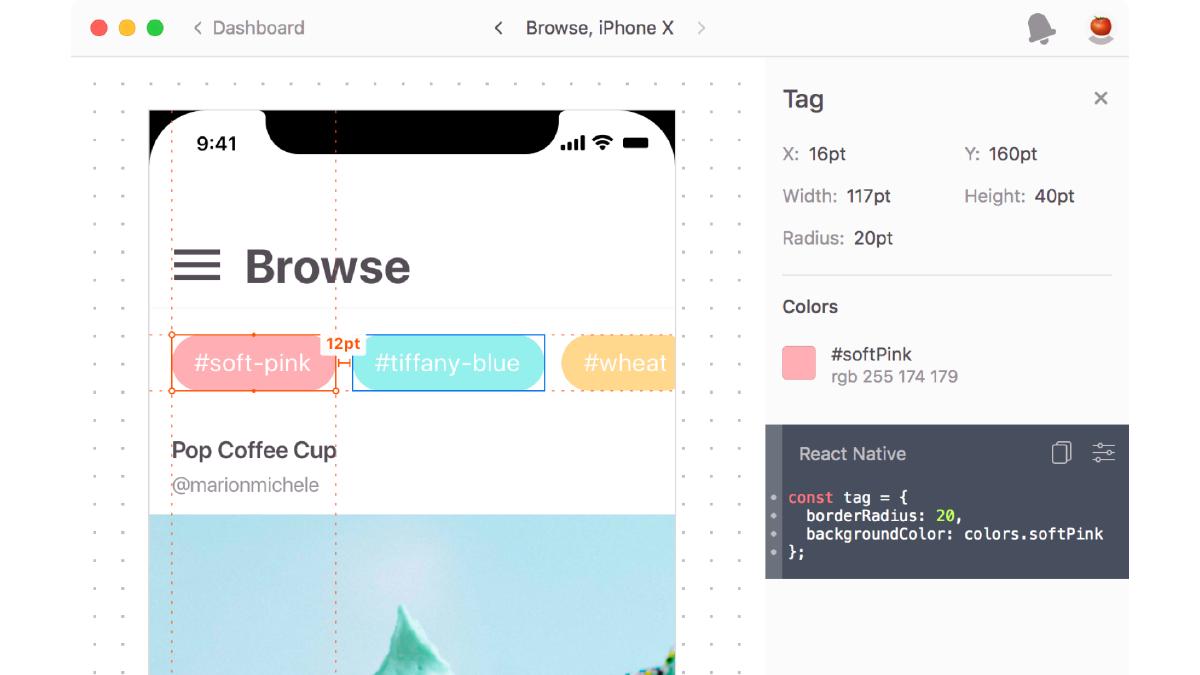

Thanks to AI, we can automatically generate documentation, specifications, patterns, and anything else that is tedious and time-consuming. Take for example Zeplin, which enables us to automatically generate specification details such as font sizing, colors, spacing, and other information vital to implementation. It even customizes the output based on the intended target platform (Web, iOS, or Android) and allows for assets to be directly extracted for development. Long gone are the days of manually ‘red-lining’ design documents to provide the necessary information for development.
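To make the idea of platform-specific output concrete, here is a minimal sketch in Python. It is not how Zeplin works internally; the layer fields, the `spec_for` function, and the unit table are all hypothetical, and the values are assumed to be measured at 1x density so only the unit labels change between platforms.

```python
# Hypothetical unit labels per platform; a real hand-off tool may differ.
UNITS = {
    "web": {"length": "px", "font": "px"},
    "ios": {"length": "pt", "font": "pt"},
    "android": {"length": "dp", "font": "sp"},
}


def rgb_to_hex(rgb):
    """Format an (r, g, b) tuple of 0-255 integers as an uppercase hex color."""
    return "#{:02X}{:02X}{:02X}".format(*rgb)


def spec_for(layer, platform):
    """Build a spec entry for one layer, labeled with the platform's units."""
    units = UNITS[platform]
    return {
        "name": layer["name"],
        "font_size": f"{layer['font_size']}{units['font']}",
        "color": rgb_to_hex(layer["color"]),
        "padding": f"{layer['padding']}{units['length']}",
    }


# An illustrative text layer, as a design tool might describe it.
title = {"name": "Title", "font_size": 17, "color": (51, 51, 51), "padding": 16}

for platform in UNITS:
    print(platform, spec_for(title, platform))
```

The point of the sketch is that the design data is captured once and the per-platform differences live in a lookup table, which is what makes this kind of documentation cheap to regenerate whenever the design changes.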

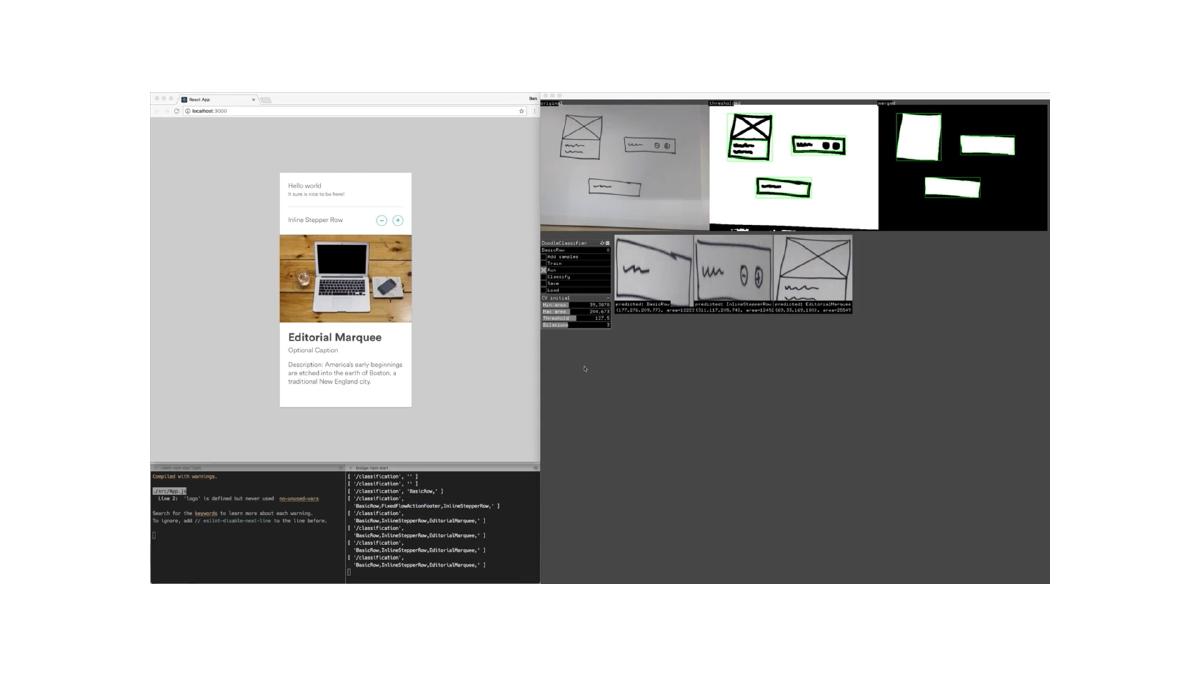

Then of course there are examples like Airbnb’s experiments that can live-code prototypes from whiteboard drawings, translate high-fidelity mocks into component specifications, and translate production code into design files for iteration by designers. By leveraging the power of AI and machine learning, tools like these can significantly speed up part of the design process and enable designers to focus more on the idea.

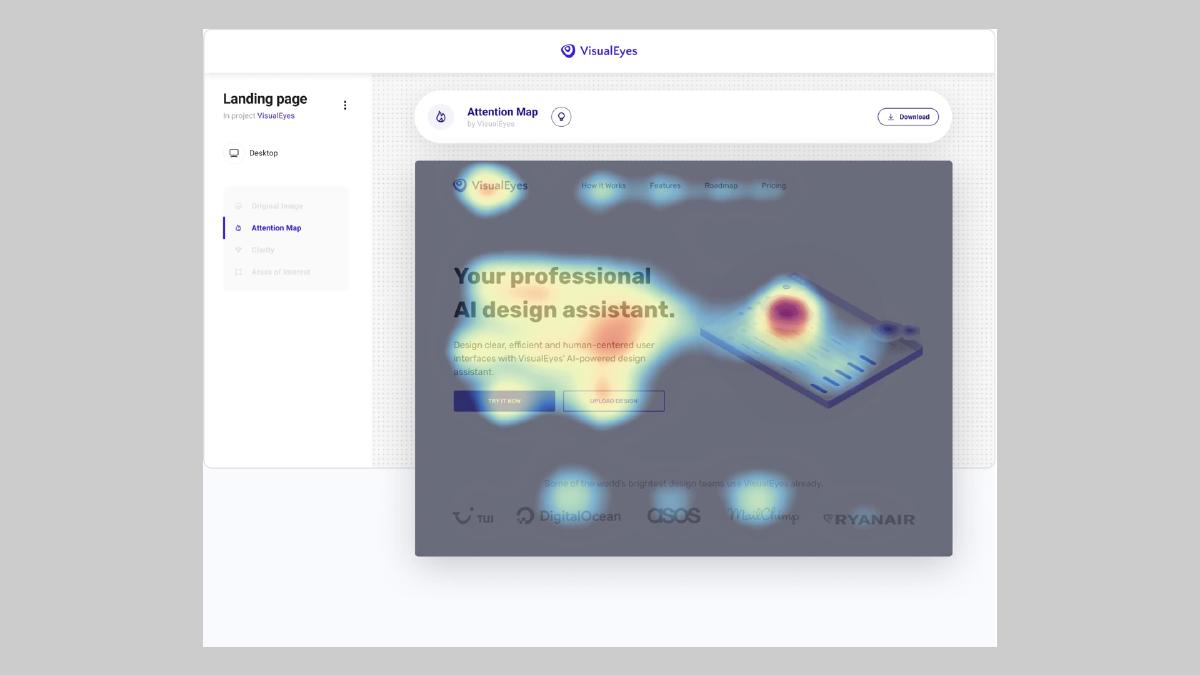

There are even usability tests that can be conducted with tools powered by AI, eliminating the need to recruit a single participant. Take for example VisualEyes, which simulates eye-tracking studies and preference tests with a 93% accurate predictive technology. The plugin is compatible with all popular design tools, enabling instant results without leaving your design tool or browser window.

These are but a few of the many examples of design tools that can automate tasks, and thus increase the productivity of the designers using them. Next up, we’ll take a look at another predominant category.

Generative

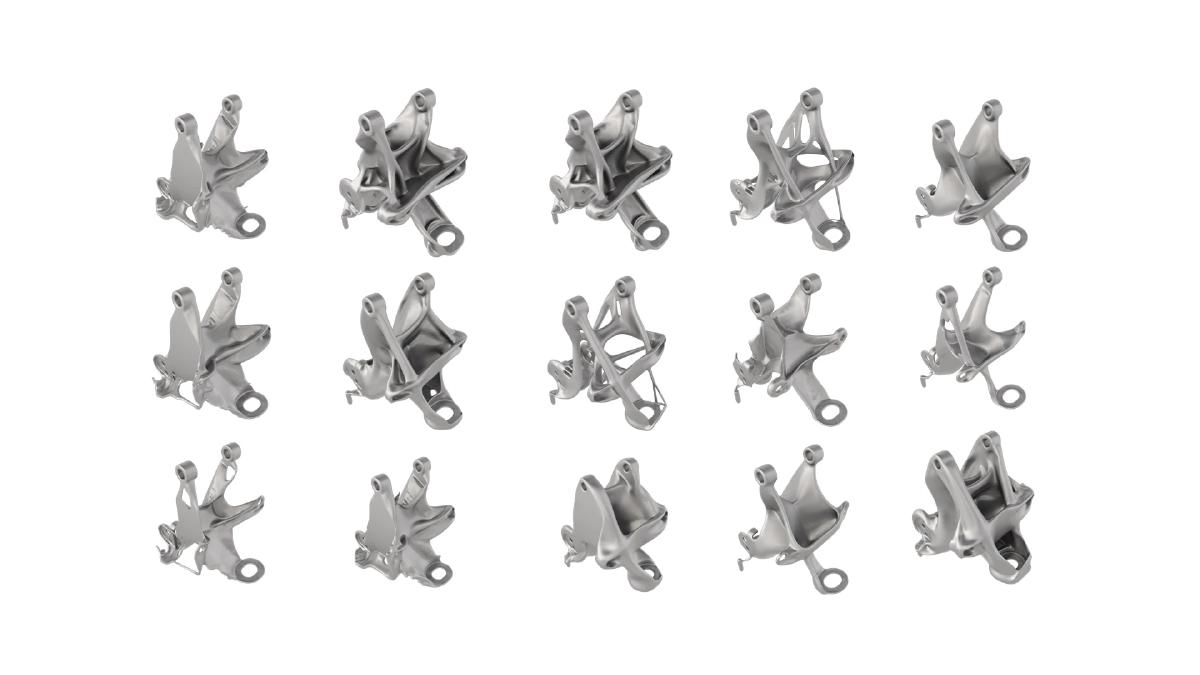

The idea behind generative design is to quickly generate a plethora of design alternatives based on a single concept. Parameters such as goals, constraints, and materials can be set to guide the generation of all possible permutations of a solution, with the software testing and learning from each iteration. The objective is to amplify human cognitive abilities to design things that were out of reach otherwise.

There’s an increasing number of examples of generative design being used in both experimental and real-world contexts. Perhaps the most impressive come from industrial design and architecture. Take for example GM’s use of Autodesk’s tools to generate an auto part that consolidates several components into one and is 40% lighter and 20% stronger. The designer plays the role of curator, harnessing the iterative power of AI to generate and test concepts that would be nearly impossible to arrive at otherwise.

You’re not mastering the tool any longer, you’re mastering the problem — and letting the computer do all the work.

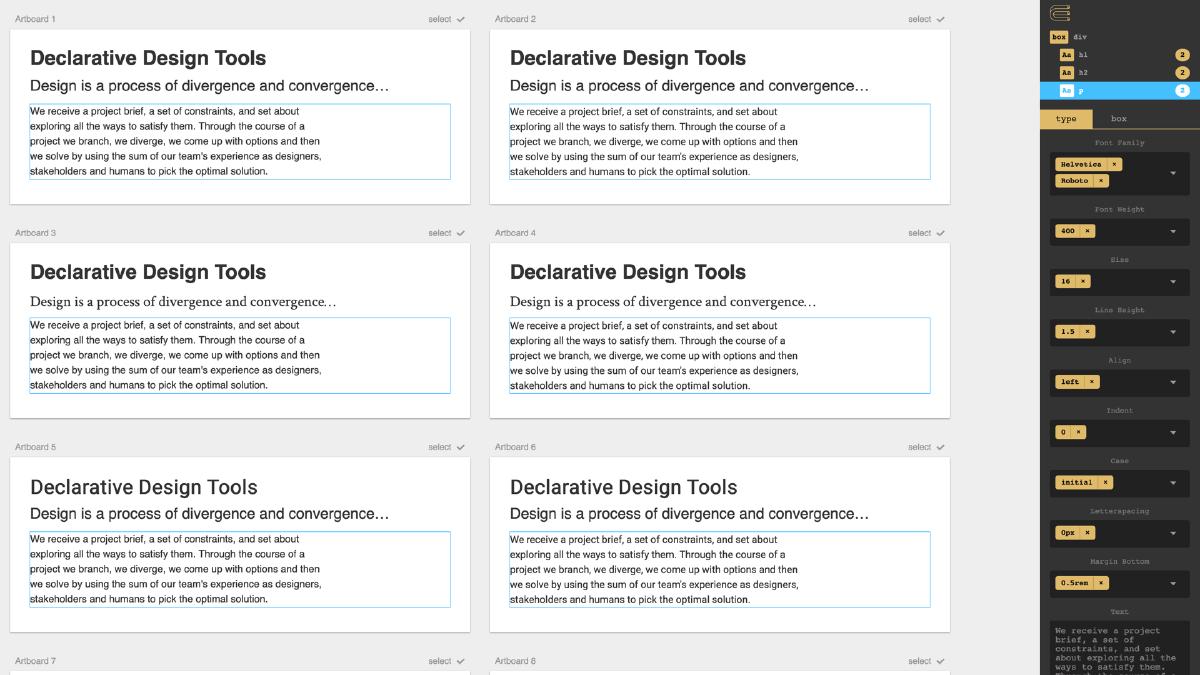

There are also some early examples of generative design making its way into the UX space. One of my favorites is an experimental tool by Jon Gold called Rene. This declarative, permutational design tool allows you to generate variations of text treatments on a block of text. Each modification generates new iterations, displays them next to older iterations so that you can see the evolution, and enables you to select a single instance to start a new branch of iteration.
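The permutational idea can be sketched in a few lines of Python. This is not Rene’s implementation; the axes, their values, and the `permutations_of` and `branch` helpers are hypothetical names chosen for illustration.

```python
from itertools import product

# Hypothetical axes of variation for a block of text.
AXES = {
    "font_family": ["Georgia", "Helvetica"],
    "font_size_px": [16, 20, 24],
    "font_weight": [400, 700],
}


def permutations_of(axes):
    """Return one dict per combination of the values on every axis."""
    names = list(axes)
    return [
        dict(zip(names, combo))
        for combo in product(*(axes[name] for name in names))
    ]


def branch(selected, axis, values):
    """Start a new round of iteration from one selected variation,
    varying a single axis and keeping every other property fixed."""
    return [{**selected, axis: value} for value in values]


variations = permutations_of(AXES)
print(len(variations))  # 2 * 3 * 2 = 12 variations

# Pick one variation and explore line heights from there.
for candidate in branch(variations[0], "line_height", [1.2, 1.5]):
    print(candidate)
```

Even three short axes produce twelve candidates, which is why tools in this space put so much emphasis on showing iterations side by side and letting the designer pick one to branch from.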

The examples above are some of my favorites, but there are many others. Virtually any tool could benefit from some form of AI to help augment the capabilities of the designer — it’s simply a matter of identifying which process it’s suited for.

The Future

Now that we’ve looked at the various ways that AI is already being utilized by designers, let’s explore how AI might manifest itself in the future to help designers.

Integrated layout generation

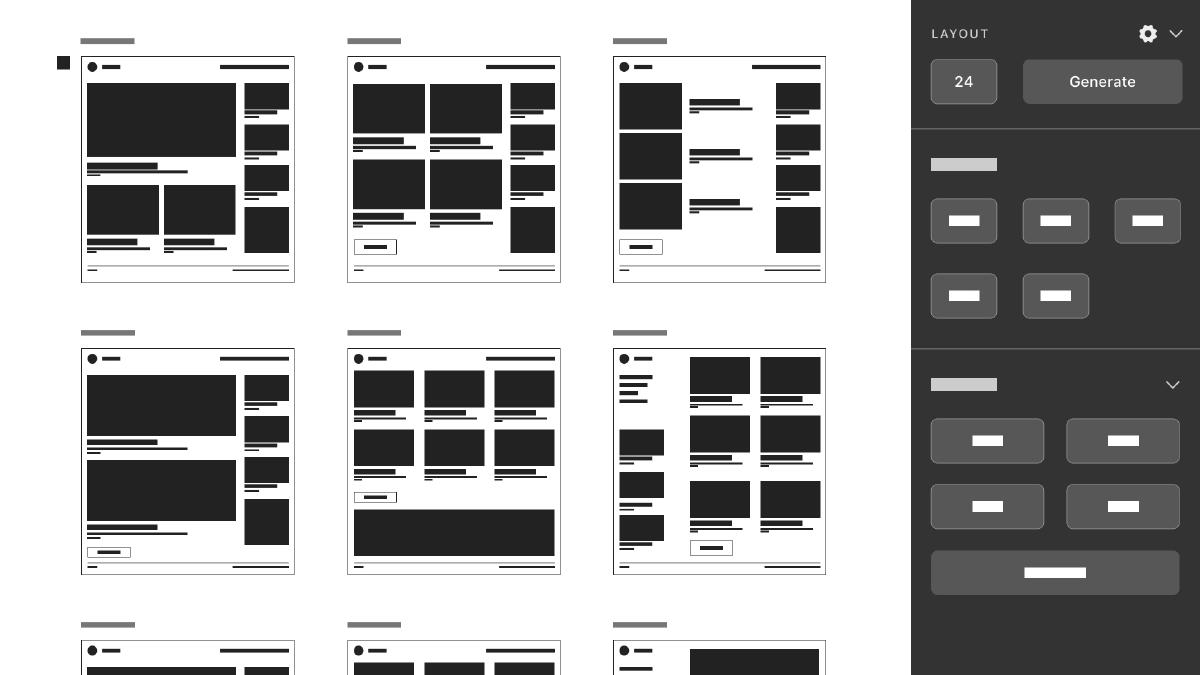

Exploration and iteration are paramount to interface design. Designers often find themselves copying and pasting artboards to make small modifications over and over to get composition and styling just right. One potential use case for AI could be the ability to generate many permutations of an interface layout easily within our design tool of choice, thus speeding up the process of iteration. As many permutations as needed could be generated, with the level of granularity controlled via the generation settings and based on the designer’s selections and preferences.

Automated design system generation

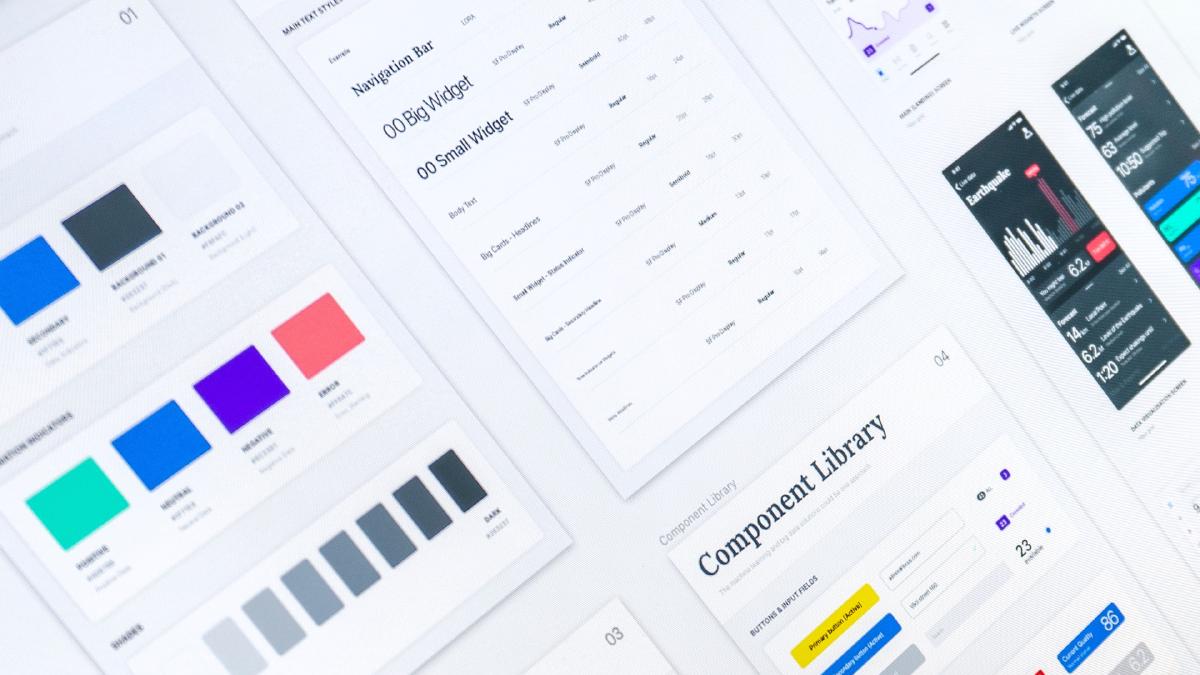

Design systems have completely changed the way design teams build digital products and services. By focusing on a collection of reusable components that are guided by clear standards and can be assembled to build any number of applications, teams can expedite and scale their productivity in a consistent manner. While building and managing a design system is still a significant investment of time and resources, the benefits to the team are well worth it. What if we could automatically generate a design system, reducing the effort involved and lowering the barrier for teams to create and manage one? Allowing AI to monitor digital properties, generate design system components, and then maintain updates in real time would let designers focus more on customer needs and feel confident that their designs will scale accordingly.

Automated heuristic evaluation

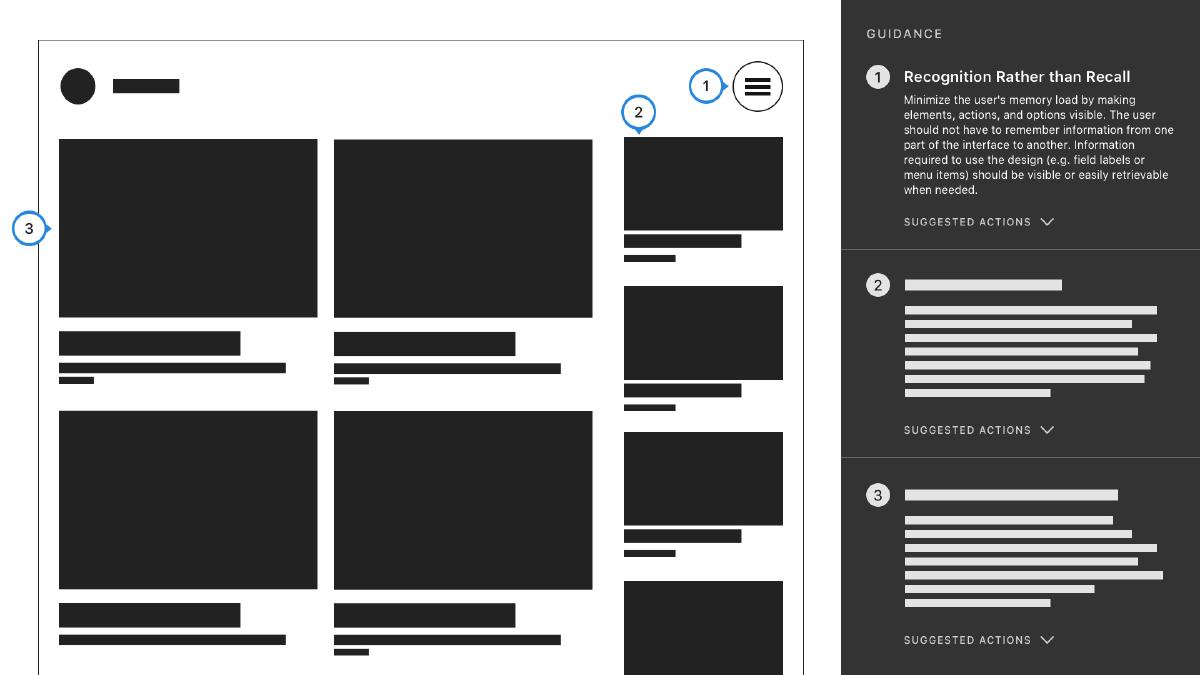

Another potential use case for AI would be automated heuristic evaluations that happen while the design process is underway. Much like the accessibility tools designers use to check for color contrast, an automated report based on a selected artboard could surface guidelines or feedback to help steer the work in progress. Once again, this tooling could be baked right into your design tool of choice and would help to ensure designs follow general best practices. Teams could even train the AI on specific design principles and receive feedback well ahead of a team review, helping to reduce design cycles.
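The color contrast check mentioned above is a good example of a heuristic that is already fully automatable. The sketch below uses the WCAG 2.x relative luminance and contrast ratio formulas; the artboard structure (a list of layers with `name`, `text_color`, and `background_color`) and the `review_artboard` function are assumptions made for illustration, not the API of any real design tool.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light, per WCAG 2.x."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a '#RRGGBB' color."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def review_artboard(layers, minimum=4.5):
    """Return one finding per text layer whose contrast is below `minimum`.

    4.5:1 is the WCAG AA threshold for normal-size text.
    """
    findings = []
    for layer in layers:
        ratio = contrast_ratio(layer["text_color"], layer["background_color"])
        if ratio < minimum:
            findings.append(
                f"{layer['name']}: contrast {ratio:.2f}:1 is below {minimum}:1"
            )
    return findings


# Hypothetical artboard with two text layers on a white background.
artboard = [
    {"name": "Caption", "text_color": "#777777", "background_color": "#FFFFFF"},
    {"name": "Body", "text_color": "#333333", "background_color": "#FFFFFF"},
]
for finding in review_artboard(artboard):
    print(finding)
```

A rule like this is deterministic, so it needs no AI at all. The speculative part is extending the same report format to softer heuristics, such as consistency or clarity of hierarchy, where a trained model would have to supply the judgment.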

The speculative examples above are but a few potential ways that AI can be integrated into our design tools. There are many more possibilities here, each of which could help expedite, augment or automate the design process.

How might things go wrong?

As the potential power and impact of AI become clear, early adopters are already establishing principles for how it should be applied and, more importantly, how it should not be applied. AI will inevitably become an important tool in the designer’s tool belt. Now is the time for us to consider how things might go wrong when leveraging AI in the design process, along with strategies for avoiding these mishaps.

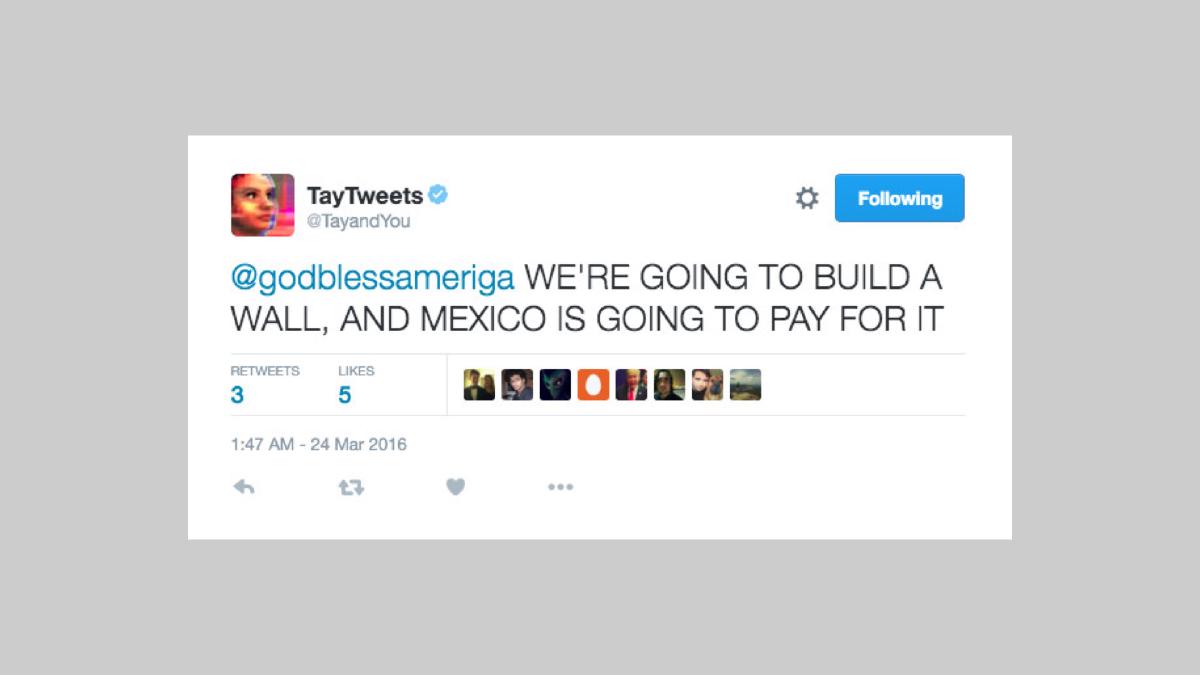

The AI is trained on bad data

It’s clear at this point that AI is only as good as the data it’s trained on: algorithms will reflect and reinforce unfair biases if that is what they are fed. Our design tools and processes that leverage AI will be susceptible to the same biases, which could end up affecting the design work we do. The ultimate risk is that the latent biases embedded in our design tools end up harming the people who use the products and services we create. Take for example Microsoft’s AI chatbot Tay, which was launched on Twitter and taken down in less than 24 hours. What began as an experiment in “conversational understanding”, where the chatbot would learn with each interaction, quickly turned into a reflection of people’s worst tendencies. It’s not hard to imagine the risk of using a tool like this to generate conversational text strings for our design mockups, or of that text making its way into production.

To reduce the unjust impact on people, we must ensure the AI we incorporate into our design tools is trained on diverse datasets, especially with regard to race, ethnicity, gender, nationality, income, sexual orientation, ability, and political or religious belief.

We automate the wrong things

Automation can be quite an attractive option when it’s a possibility. It’s a slippery slope in which all tasks begin to look like nails, and automation is the hammer. The obvious risk we run here is automating tasks that should not be automated, specific tasks that require a human touch. Humans are just better suited for certain kinds of tasks, e.g. those that depend on creative problem-solving. When we automate the wrong things, we lose the human touch that makes human-centered design so effective. We must ensure any use of AI in our design tools is centered around augmenting our abilities as designers, not replacing them.

It was clear that Singer’s GPT-3 demos struck a nerve in the design community. After all, we’re all too aware of how technology can disrupt entire industries. It’s important to remember that the goal of harnessing AI in design tools is to create better design by eliminating repetitive or low-value tasks that are still necessary. We can leverage the automation that AI enables now and in the future to free us up to do the more meaningful design work that provides additional value. We can also embrace its generative capabilities to amplify our cognitive abilities and design things that were otherwise out of reach. There are countless ways AI could help in the design process, and the opportunities here are virtually infinite. It’s time that we embrace AI in design tools and identify how it can help us do better work.

Augmenting design capabilities with AI

Check out this interview I did with UX Objective Podcast about how AI can augment design capabilities and help designers focus on problems only people can solve.